You’ve just exported 500,000 rows from a database. Excel opens the file and freezes. Your laptop fan starts whirring like a jet engine. Meanwhile, your colleague opens the same data as CSV in a text editor and starts processing it with a Python script in seconds.

This scenario plays out daily in offices and dev environments worldwide. The choice between CSV and Excel isn’t just about file format. It’s about matching the right tool to your workflow, dataset size, and processing needs.

CSV files excel at speed, simplicity, and automation for large datasets. Excel shines for human-readable analysis with formatting and formulas. Choose CSV for programmatic processing, data pipelines, and version control. Pick Excel when you need visual analysis, complex calculations, or stakeholder-ready reports. Understanding each format’s strengths prevents workflow bottlenecks and saves hours of processing time.

Understanding the fundamental differences

CSV stands for comma-separated values. It’s plain text. Each line represents a row. Commas separate the columns. That’s it.

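Excel files are structured containers. The modern .xlsx format is a ZIP archive of XML files, while the legacy .xls format is binary. They store data, formulas, formatting, charts, and metadata, and macro-enabled variants such as .xlsm also store macros. A single Excel file can hold multiple worksheets, each with its own structure.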

This fundamental difference drives every other distinction. CSV prioritizes simplicity. Excel prioritizes features.

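File size tells part of the story. A CSV with 100,000 rows of sales data might be 5MB. The same data in Excel is wrapped in XML markup and picks up column widths, cell colors, and formulas along the way. Because .xlsx is ZIP-compressed, the file on disk may not look much bigger, but the content Excel has to unpack and parse is often several times larger than the plain-text CSV.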

When CSV beats Excel for data workflows

CSV files load faster. Period.

If you’re working with datasets over 50,000 rows, the speed difference becomes obvious. Excel needs to parse the file structure, load formatting rules, recalculate formulas, and render the interface. CSV just reads text.

Command-line tools love CSV. Tools like awk, sed, grep, and cut can process millions of rows without breaking a sweat. Try that with an Excel file and you’ll need specialized libraries just to open it.

Version control systems work beautifully with CSV. Git can show you exactly which rows changed between commits. Excel files are opaque binaries to Git. A single cell edit changes the compressed file in ways Git can’t express as a line-by-line diff, so you learn that the file changed but not what changed.
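To make the diff point concrete, here is a minimal sketch using Python’s standard difflib module, which produces the same unified-diff style Git shows. The two CSV snippets and the `csv_diff` helper are made up for illustration.

```python
import difflib

# Two versions of a small CSV file (illustrative data, not a real dataset).
before = "id,name,total\n1,Ada,100\n2,Grace,250\n3,Linus,75\n"
after = "id,name,total\n1,Ada,100\n2,Grace,300\n3,Linus,75\n"

def csv_diff(old_text, new_text):
    """Return only the added and removed rows between two CSV texts."""
    diff = difflib.unified_diff(
        old_text.splitlines(),
        new_text.splitlines(),
        fromfile="before.csv",
        tofile="after.csv",
        lineterm="",
    )
    # Keep +/- rows, skip the ---/+++ file headers and the @@ hunk markers.
    return [
        line for line in diff
        if line.startswith(("+", "-")) and not line.startswith(("---", "+++"))
    ]

changes = csv_diff(before, after)
print("\n".join(changes))
```

The one edited row shows up as a removed line and an added line, which is exactly what a reviewer needs to see in a commit.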

Automation pipelines prefer CSV. Your ETL scripts, data ingestion jobs, and API endpoints can read and write CSV without heavyweight dependencies. Excel requires libraries like openpyxl, xlrd, or pandas with extra modules.

Here’s a real scenario: you need to merge customer data from three sources, filter out duplicates, and upload to a database. With CSV, you write a 20-line Python script. With Excel, you’re wrestling with cell references, hidden rows, and formatting that breaks your parser.
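A script along those lines might look like the sketch below. It assumes all three sources share the same columns and that a lowercased email address identifies a customer; the sample data and the `merge_customers` name are hypothetical, and the database upload is left out.

```python
import csv
import io

# Three hypothetical exports with identical columns (sample data for illustration).
crm = "email,name\nada@example.com,Ada\ngrace@example.com,Grace\n"
shop = "email,name\nGrace@Example.com,Grace H.\nlinus@example.com,Linus\n"
newsletter = "email,name\nada@example.com,Ada L.\nken@example.com,Ken\n"

def merge_customers(*sources):
    """Merge CSV texts, keeping the first row seen for each email address."""
    seen = set()
    merged = []
    for text in sources:
        for row in csv.DictReader(io.StringIO(text)):
            # Assumption: a case-insensitive email uniquely identifies a customer.
            key = row["email"].strip().lower()
            if key not in seen:
                seen.add(key)
                merged.append(row)
    return merged

customers = merge_customers(crm, shop, newsletter)

# Write the deduplicated rows back out as CSV, ready for a bulk import.
out = io.StringIO()
writer = csv.DictWriter(out, fieldnames=["email", "name"])
writer.writeheader()
writer.writerows(customers)
print(out.getvalue())
```

With real files you would pass open file handles instead of `io.StringIO` objects; the merge logic stays the same.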

Where Excel still wins

Excel makes data human-readable. Colors, borders, frozen headers, and column widths help stakeholders scan information quickly.

Formulas provide instant calculations. Need to sum a column? =SUM(A2:A1000) does it. CSV requires you to write code or import into another tool.
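For comparison, the scripted equivalent of that formula is short but not free. A minimal sketch, assuming a column named `amount` (a made-up name) and using Decimal so currency values add up exactly:

```python
import csv
import io
from decimal import Decimal

# Sample data standing in for a real file (hypothetical column names).
sales_csv = "order_id,amount\n1001,19.99\n1002,5.00\n1003,42.50\n"

def sum_column(text, column):
    """Add up one numeric column of a CSV, the scripted counterpart of =SUM()."""
    reader = csv.DictReader(io.StringIO(text))
    return sum(Decimal(row[column]) for row in reader)

total = sum_column(sales_csv, "amount")
print(total)
```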

Data validation prevents errors. Excel lets you restrict cells to dropdown lists, date ranges, or numeric limits. CSV accepts anything. Your pipeline might crash when someone types “N/A” in a number column.
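Since CSV has no built-in validation, the checking has to live in your code. The sketch below shows one defensive pattern: collect bad rows instead of crashing on them. The `quantity` column and the `parse_quantities` function are illustrative assumptions.

```python
import csv
import io

# Illustrative input: one row has "N/A" where a number is expected.
raw = "sku,quantity\nA1,10\nB2,N/A\nC3,7\n"

def parse_quantities(text):
    """Split rows into valid records and rejects instead of failing on bad values."""
    valid, rejected = [], []
    # start=2 because line 1 of the file is the header row.
    for line_no, row in enumerate(csv.DictReader(io.StringIO(text)), start=2):
        try:
            row["quantity"] = int(row["quantity"])
        except ValueError:
            rejected.append((line_no, row))
            continue
        valid.append(row)
    return valid, rejected

valid, rejected = parse_quantities(raw)
for line_no, row in rejected:
    print(f"line {line_no}: bad quantity {row['quantity']!r}")
```

Reporting the line number alongside the rejected value makes it much easier to send the problem back to whoever produced the file.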

Charts and pivot tables turn data into insights. Executives don’t want CSV files. They want graphs, trend lines, and interactive summaries.

Multiple sheets organize related data. You can keep raw data, cleaned data, and summary statistics in one file. CSV forces you to manage separate files or concatenate everything into one messy table.

Speed comparison for common tasks

| Task | CSV Performance | Excel Performance |
|---|---|---|
| Opening 1M rows | 2-5 seconds in text editor | 30+ seconds or crashes |
| Finding specific text | Instant with grep | Slow search dialog |
| Batch processing 100 files | Seconds with scripts | Manual or complex VBA |
| Loading into Python/R | Fast with pandas | Slower, needs openpyxl |
| Version control diffs | Clean line-by-line changes | Binary blob, no useful diff |
| Sharing with non-technical users | Requires import step | Opens directly, looks nice |

Practical workflows for developers and analysts

Use CSV when you need to:

- Process data programmatically with scripts or pipelines

- Store data in version control alongside code

- Exchange data between different systems and platforms

- Handle datasets larger than Excel’s 1,048,576 row limit

- Minimize file size for storage or transfer

- Enable command-line data manipulation

- Feed data into machine learning models or statistical tools
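If you’re unsure whether a file will fit in a worksheet at all, you can check without opening it in Excel. This is a small sketch that streams the file and stops as soon as the row limit is exceeded; the `fits_in_excel` name is made up for the example.

```python
import csv
import io

EXCEL_MAX_ROWS = 1_048_576  # worksheet row limit, header row included

def fits_in_excel(handle):
    """Stream a CSV and report whether its rows fit in a single worksheet."""
    rows = 0
    # csv.reader counts records, so quoted fields with line breaks count once.
    for _ in csv.reader(handle):
        rows += 1
        if rows > EXCEL_MAX_ROWS:
            return False  # stop early, no need to read the rest
    return True

small = io.StringIO("a,b\n1,2\n")
print(fits_in_excel(small))
```

For a file on disk, pass `open(path, encoding="utf-8", newline="")` as the handle. Memory use stays flat because only one row is held at a time.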

Use Excel when you need to:

- Share reports with stakeholders who expect formatted tables

- Perform ad-hoc analysis with formulas and pivot tables

- Validate data entry with dropdowns and rules

- Create charts and visualizations for presentations

- Work with multiple related datasets in one file

- Collaborate with team members who aren’t comfortable with code

- Maintain audit trails with cell comments and change tracking

Converting between formats efficiently

Moving from Excel to CSV is straightforward. Open the file, click Save As, choose CSV. Done.

But watch out for these gotchas:

- Only the active sheet is saved, so each additional sheet needs its own export to a separate CSV file

- Formulas convert to their current values, not the formula itself

- Formatting disappears completely

- Special characters might break if encoding isn’t UTF-8, so pick the “CSV UTF-8” option in the Save As dialog when it’s available

Going from CSV to Excel is just as simple. Open Excel, import the CSV, save as .xlsx.

The real challenge is maintaining data integrity. Dates are the worst offender. Excel auto-converts text that looks like dates. “1-2” becomes January 2nd. “OCT4” becomes October 4th. Gene names like “SEPT1” have famously broken scientific research because Excel mangled them.
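One way to sidestep auto-conversion is to read such files with code that does no type guessing. Python’s csv module returns every field as a string, so values survive exactly as written. A minimal sketch with made-up sample rows:

```python
import csv
import io

# Values that spreadsheet auto-formatting tends to reinterpret as dates.
raw = "gene,ratio\nSEPT1,1-2\nOCT4,3-4\n"

def read_as_text(text):
    """Parse CSV without type inference: every field comes back as str."""
    return list(csv.reader(io.StringIO(text)))

rows = read_as_text(raw)
for row in rows[1:]:
    print(row)
```

Any conversion to dates or numbers then becomes an explicit step you control, column by column.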

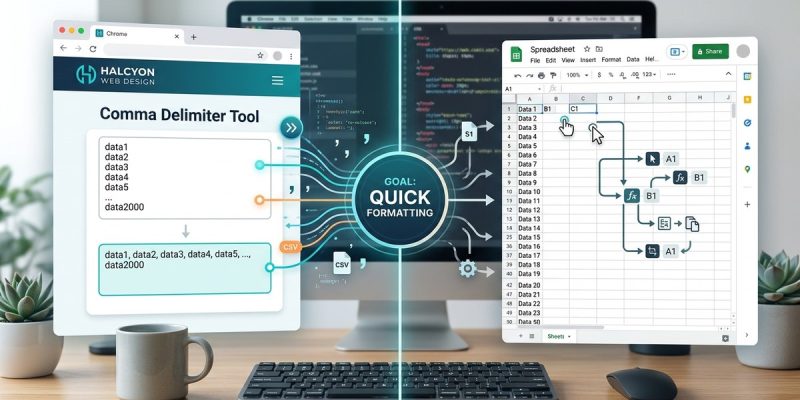

For bulk conversions, tools like Delimiter.site let you preview how your data will split before committing to a format, which helps catch encoding issues and delimiter problems before they corrupt your dataset.

When processing CSV files programmatically, always specify UTF-8 encoding explicitly. Default encodings vary by system and cause silent data corruption that’s painful to debug later.
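In Python, that means passing `encoding` every time you open a file. The sketch below writes and re-reads a few rows containing non-ASCII text; the file name and sample rows are illustrative.

```python
import csv
import os
import tempfile

def write_rows(path, rows):
    # newline="" lets the csv module control line endings, as its docs recommend.
    with open(path, "w", encoding="utf-8", newline="") as f:
        csv.writer(f).writerows(rows)

def read_rows(path):
    # Same explicit encoding on the way back in, never the platform default.
    with open(path, "r", encoding="utf-8", newline="") as f:
        return list(csv.reader(f))

rows = [["city", "note"], ["Zürich", "café, 2nd floor"], ["東京", "ok"]]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "cities.csv")
    write_rows(path, rows)
    round_tripped = read_rows(path)

print(round_tripped == rows)
```

If the file is destined for Excel, writing with `encoding="utf-8-sig"` adds a byte order mark, which Excel uses to recognize UTF-8.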

Handling delimiter confusion

Commas aren’t the only option. Tab-separated values (TSV) use tabs instead. Pipe-delimited files use the | character. Some European systems use semicolons because commas indicate decimals there.
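Reading a semicolon-delimited export takes one extra argument, plus a conversion step for decimal commas. This sketch assumes a simple `product;price` layout with no thousands separators; the data and function name are invented for the example.

```python
import csv
import io

# Illustrative export: semicolons between fields, commas as decimal marks.
raw = "product;price\nWidget;12,50\nGadget;1099,99\n"

def read_semicolon_csv(text):
    """Parse a semicolon-delimited CSV and convert decimal commas to floats."""
    reader = csv.DictReader(io.StringIO(text), delimiter=";")
    return [
        # Assumption: no thousands separators, so a plain replace is enough.
        {"product": row["product"], "price": float(row["price"].replace(",", "."))}
        for row in reader
    ]

products = read_semicolon_csv(raw)
print(products)
```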

The delimiter matters when your data contains the delimiter character. Product descriptions with commas break comma-delimited files. Addresses with apartment numbers like “Suite 100, Building A” create extra columns.

Proper CSV files handle this by wrapping the field in double quotes, so "Smith, John" stays as one field. But not all systems generate proper CSV. You’ll encounter files where delimiters appear unquoted, creating phantom columns.

Here’s how to diagnose delimiter issues:

- Open the file in a plain text editor

- Count the delimiters in the first few rows

- Check if counts match across rows

- Look for quoted fields containing the delimiter

- Verify the actual delimiter matches what you expected
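The checklist above can be partly automated. The sketch below counts candidate delimiters in the first few lines, then uses a real CSV parser to find rows whose field count differs from the header. Both helper names and the sample text are hypothetical.

```python
import csv
import io

def delimiter_report(text, candidates=",;\t|", sample_rows=5):
    """Count each candidate delimiter per line in the first few lines."""
    lines = text.splitlines()[:sample_rows]
    return {d: [line.count(d) for line in lines] for d in candidates}

def inconsistent_rows(text, delimiter):
    """Return (row_number, field_count) for rows wider or narrower than the header."""
    rows = list(csv.reader(io.StringIO(text), delimiter=delimiter))
    expected = len(rows[0])
    return [(i, len(r)) for i, r in enumerate(rows, start=1) if len(r) != expected]

# Row 2 quotes a field containing the delimiter; row 3 has an unquoted extra one.
sample = (
    "name|address|city\n"
    'Ada|"Suite 100| Building A"|London\n'
    "Ken|12 Main St|Unit 4|Paris\n"
)

report = delimiter_report(sample)
problems = inconsistent_rows(sample, "|")
print(report["|"], problems)
```

Raw counts flag both row 2 and row 3 as suspicious, but the parser shows that only row 3 is actually broken, because the quoted field in row 2 is handled correctly.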

If you’re generating CSV programmatically, use a proper CSV library. Don’t concatenate strings with commas. Libraries handle quoting, escaping, and edge cases correctly.
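Here is a small side-by-side sketch of the difference, using Python’s csv module and a made-up row that contains both commas and double quotes:

```python
import csv
import io

row = ["Smith, John", 'He said "hi"', "Suite 100, Building A"]

# Naive approach: the embedded commas silently create extra columns.
naive_line = ",".join(row)

# csv.writer quotes fields containing the delimiter and doubles embedded quotes.
buffer = io.StringIO()
csv.writer(buffer).writerow(row)
proper_line = buffer.getvalue()

# Parse both lines back to see what a downstream reader would get.
naive_fields = next(csv.reader([naive_line]))
proper_fields = next(csv.reader([proper_line]))

print(len(naive_fields), "fields from the naive line")
print(len(proper_fields), "fields from the csv.writer line")
```

The three-field row comes back as five fields from the concatenated line and as the original three from the library-written line.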

File size and performance at scale

CSV carries no formatting overhead, so pure data stays as compact as plain text allows.

But compression changes the math. An .xlsx file is already a ZIP archive, so compressing it again saves very little. Plain-text CSV is highly repetitive and typically shrinks to a fraction of its original size with gzip or zip.

For storage and transmission, compress your CSV. Gzip decompresses quickly, and many tools, pandas included, read .csv.gz files directly.
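Rather than guessing, it’s easy to measure how well a given CSV compresses. A minimal sketch using gzip from the standard library, with synthetic rows standing in for real data (your own ratio will depend on how repetitive the data is):

```python
import csv
import gzip
import io

def make_sample_csv(rows=10_000):
    """Build an in-memory CSV of synthetic sales rows (made-up data)."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["order_id", "region", "status", "amount"])
    regions = ["north", "south", "east", "west"]
    for i in range(rows):
        writer.writerow([i, regions[i % 4], "shipped", f"{(i * 37) % 1000}.00"])
    return buffer.getvalue().encode("utf-8")

raw = make_sample_csv()
compressed = gzip.compress(raw)
ratio = len(raw) / len(compressed)
print(f"raw: {len(raw):,} bytes, gzip: {len(compressed):,} bytes, ratio: {ratio:.1f}x")
```

To test a real file, read its bytes with `open(path, "rb").read()` and pass them to `gzip.compress` in the same way.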

Loading speed depends on your tool. Pandas reads CSV faster than Excel in most cases, and the pyarrow engine for read_csv can speed that up further. For Excel files, recent pandas versions support a calamine engine that reads .xlsx considerably faster than openpyxl.

Database imports heavily favor CSV. PostgreSQL’s COPY command bulk-loads CSV far faster than row-by-row inserts, so millions of rows can go in within seconds to minutes depending on hardware and indexes. Importing from Excel requires conversion first.

Common mistakes that slow you down

| Mistake | Why It Hurts | Better Approach |
|---|---|---|
| Opening huge CSV in Excel | Crashes or extreme slowdown | Use command-line tools or specialized viewers |
| Not specifying encoding | Silent data corruption | Always declare UTF-8 explicitly |
| Using Excel for version control | Useless binary diffs | Convert to CSV for tracking changes |
| Storing formulas in CSV | Formulas become static values | Keep calculation logic in scripts |
| Mixing data types in columns | Parser errors and type confusion | Enforce consistent types per column |
| Forgetting to escape delimiters | Extra phantom columns | Use proper CSV libraries with quoting |

Automation and scripting considerations

CSV integrates seamlessly with every programming language. Python’s csv module is in the standard library. JavaScript, Ruby, Go, and Rust all have robust CSV parsers.

Excel requires third-party libraries. Python’s openpyxl works well but adds dependencies. Reading and writing takes more code and more memory.

For daily automated reports, CSV makes sense. Your cron job pulls data, generates CSV, uploads to S3. Simple.

For monthly stakeholder reports, Excel makes sense. You generate the data as CSV, then use a template Excel file with pre-built charts. Your script populates the data range, and the charts update automatically.

Chaining tools together favors CSV. Output from one script becomes input to the next. No format conversion needed.
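The same idea works inside a single script. The sketch below chains three small stages that each consume and produce rows, so any stage can be swapped or reused. The `status` column and the stage names are assumptions for the example.

```python
import csv
import io

def read_rows(handle):
    """Stage 1: parse CSV text into dictionaries, one row at a time."""
    yield from csv.DictReader(handle)

def only_paid(rows):
    """Stage 2: keep paid orders (the 'status' column is a made-up example)."""
    return (row for row in rows if row["status"] == "paid")

def write_rows(rows, handle, fieldnames):
    """Stage 3: emit CSV again so the next tool can consume it unchanged."""
    writer = csv.DictWriter(handle, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)

source = io.StringIO("order_id,status\n1,paid\n2,refunded\n3,paid\n")
sink = io.StringIO()
write_rows(only_paid(read_rows(source)), sink, ["order_id", "status"])
result = sink.getvalue()
print(result)
```

Replace `source` with `sys.stdin` and `sink` with `sys.stdout` and the script slots into a shell pipeline between other CSV-aware tools. Because every stage is a generator, rows stream through without loading the whole file.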

Making the right choice for your project

Ask yourself these questions:

- Will this data be processed by code or viewed by humans?

- Does the dataset exceed 500,000 rows?

- Do you need to track changes over time in version control?

- Will non-technical stakeholders interact with this file?

- Does the data require formulas or just raw values?

- Are you building an automated pipeline or a one-time analysis?

Your answers point to the right format. Most projects benefit from both. Raw data lives in CSV. Reports and analysis happen in Excel.

Don’t force Excel into automation pipelines. Don’t force CSV onto executives who expect formatted tables.

Compatibility across systems and platforms

CSV is universal. Every database, every programming language, every spreadsheet application reads CSV. It’s the lowest common denominator.

Excel formats vary. .xls is the old binary format. .xlsx is the modern XML-based format. LibreOffice and Google Sheets handle both, but edge cases exist. Macros don’t transfer. Some formulas behave differently.

If you’re exchanging data with external partners, CSV eliminates most compatibility questions. Send UTF-8 CSV, state the delimiter, and it will open almost anywhere.

Cloud platforms prefer CSV. BigQuery, Redshift, Snowflake all have optimized CSV importers. Excel requires conversion or specialized connectors.

APIs typically output JSON or CSV. Rarely Excel. If you’re building an API that serves tabular exports, CSV is the standard choice.

Choosing the format that fits your workflow

The CSV vs Excel debate isn’t about one format being superior. It’s about matching capabilities to requirements.

CSV delivers speed, simplicity, and scriptability. Excel provides formatting, formulas, and human-friendly interfaces.

Use CSV for data pipelines, version control, and programmatic processing. Use Excel for stakeholder reports, ad-hoc analysis, and collaborative editing.

Most effective workflows use both. Extract data as CSV. Transform and load with scripts. Generate final reports in Excel.

Stop fighting your tools. Pick the format that makes your specific task easier, not the one that’s theoretically better. Your workflow will thank you.