Google’s Core Web Vitals have become essential metrics for website performance. If your site loads slowly or feels clunky, you’re losing visitors and search rankings. The good news? You don’t need to guess what’s wrong. The right measurement tools show you exactly where your site struggles and what to fix first.

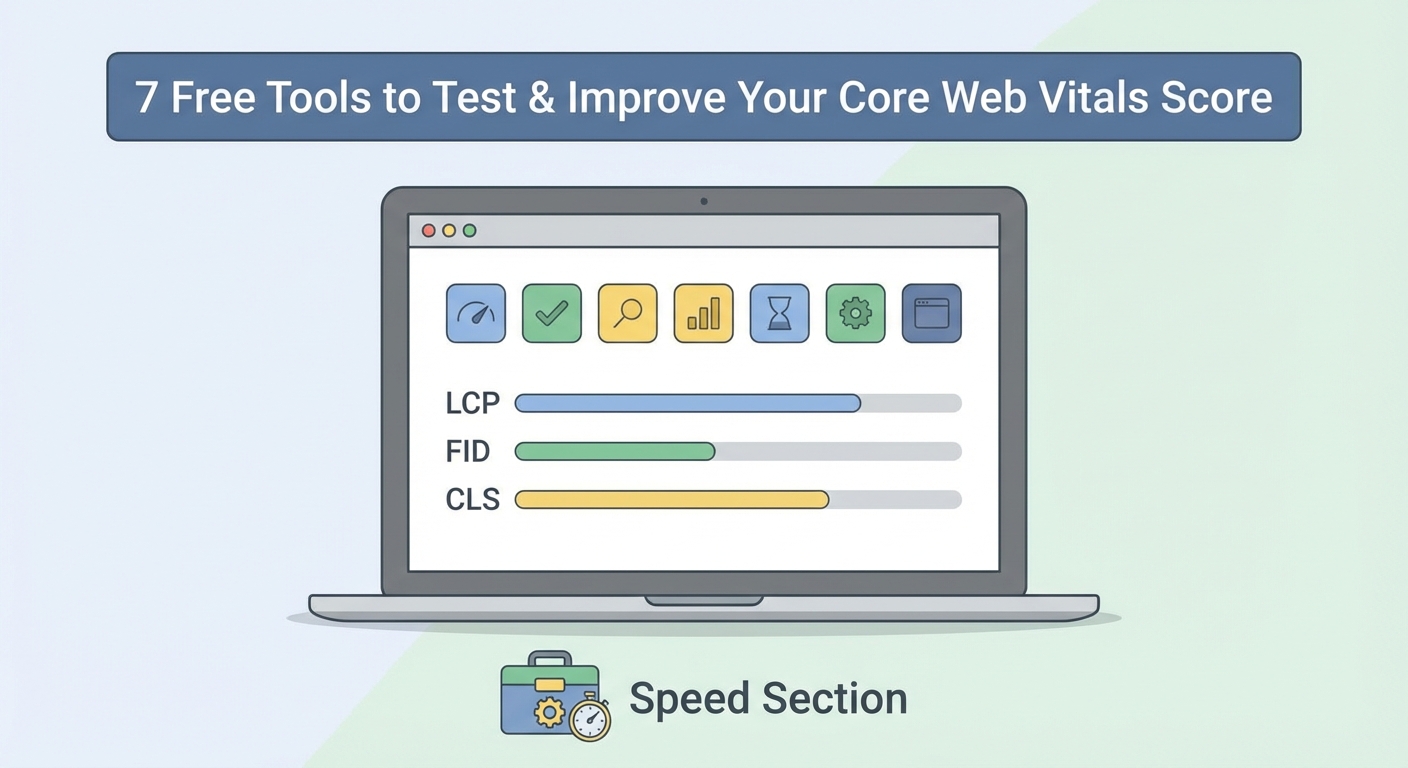

Core Web Vitals tools measure three critical metrics: Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in March 2024), and Cumulative Layout Shift (CLS). Using the right combination of lab and field data tools helps you identify performance bottlenecks, prioritize fixes, and track improvements over time. Most professional tools are free and provide actionable recommendations you can implement immediately.

Understanding what Core Web Vitals actually measure

Core Web Vitals focus on three user experience metrics that Google considers essential.

Largest Contentful Paint (LCP) measures loading performance. It tracks how long it takes for the largest visible content element to appear on screen. This could be a hero image, a video thumbnail, or a large text block. Good LCP happens within 2.5 seconds of when the page starts loading.

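Interaction to Next Paint (INP) measures responsiveness. It observes how long the page takes to show a visual update after each user interaction (clicking a button, tapping a link, pressing a key) and reports a value close to the slowest one seen during the visit. Pages should have an INP of 200 milliseconds or less. INP replaced First Input Delay (FID) as a Core Web Vital in March 2024. FID captured only the delay before the browser could begin responding to the first interaction, with a good threshold of 100 milliseconds.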

Cumulative Layout Shift (CLS) measures visual stability. It quantifies how much your page layout shifts unexpectedly during loading. You know that annoying moment when you’re about to click something and the page jumps? That’s what CLS measures. A good score is less than 0.1.
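To make the CLS number less abstract, here is a minimal sketch of how the score is put together, following the public definition on web.dev: each unexpected shift scores its impact fraction times its distance fraction, shifts are grouped into session windows (less than 1 second between shifts, at most 5 seconds per window), and CLS is the largest window total. The function names and the `(timestamp, score)` input shape are assumptions made for illustration, and browsers do this bookkeeping for you.

```python
from typing import List, Tuple


def layout_shift_score(impact_fraction: float, distance_fraction: float) -> float:
    """Score of one shift: share of the viewport affected times distance moved."""
    return impact_fraction * distance_fraction


def cumulative_layout_shift(shifts: List[Tuple[float, float]]) -> float:
    """Return the largest session-window total.

    `shifts` holds (timestamp_seconds, score) pairs for unexpected shifts.
    A new window starts when the gap since the previous shift reaches 1 s
    or the current window has lasted 5 s.
    """
    best = 0.0
    window_total = 0.0
    window_start = 0.0
    previous_time = 0.0
    for index, (time, score) in enumerate(sorted(shifts)):
        starts_new_window = (
            index == 0
            or time - previous_time >= 1.0
            or time - window_start >= 5.0
        )
        if starts_new_window:
            window_total = 0.0
            window_start = time
        window_total += score
        previous_time = time
        best = max(best, window_total)
    return best
```

In this sketch, two shifts of 0.05 and 0.04 that land within a second of each other count together (about 0.09), while a later, isolated shift of 0.02 starts its own window and doesn’t raise the result.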

These metrics matter because they directly affect how people experience your website. A slow LCP means visitors stare at a blank screen. Poor responsiveness creates frustration when buttons don’t react. High CLS causes misclicks and reading disruption.

Lab data versus field data tools

Performance tools fall into two categories, and you need both.

Lab data comes from controlled testing environments. These tools load your page in a simulated browser under specific conditions. They’re perfect for testing during development and comparing before and after changes. The downside? They don’t reflect how real users with different devices and network speeds experience your site.

Field data comes from actual visitors using your website. These tools collect performance metrics from real browsers in the wild. They show you what your users actually experience but can’t give you data until people visit your site. They also can’t test changes before you publish them.

The smartest approach uses both types. Lab tools help you identify and fix problems. Field tools confirm your fixes worked for real users.

Google PageSpeed Insights for comprehensive analysis

PageSpeed Insights combines lab and field data in one interface. Just enter your URL and wait about 30 seconds.

The tool shows you two sets of scores. The top section displays field data from the Chrome User Experience Report, showing how real Chrome users experienced your page over the past 28 days. Below that, you get lab data from Lighthouse, showing how your page performs in a simulated environment.

Each metric gets a color code. Green means good, orange needs improvement, and red requires immediate attention. The tool doesn’t just show scores. It provides specific recommendations ranked by potential impact.

For example, it might tell you to “eliminate render blocking resources” and list exactly which CSS and JavaScript files are causing delays. It shows you the potential time savings for each fix.

One limitation: PageSpeed Insights only tests one page at a time. Testing your entire site requires checking each important page individually.
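If checking pages one by one gets tedious, the public PageSpeed Insights API (v5) can be scripted. The sketch below only builds the request URL and pulls a few fields out of a response that has already been decoded from JSON. The function names are made up for this example, and the response keys reflect the v5 format as I understand it, so verify them against the API reference before relying on them.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url: str, strategy: str = "mobile", api_key: str = "") -> str:
    """Build a request URL for one page; the API key is optional for light use."""
    params = {"url": page_url, "strategy": strategy, "category": "performance"}
    if api_key:
        params["key"] = api_key
    return f"{PSI_ENDPOINT}?{urlencode(params)}"


def summarize(response: dict) -> dict:
    """Extract the lab performance score (0-100) and field-data categories."""
    lab = response.get("lighthouseResult", {})
    score = lab.get("categories", {}).get("performance", {}).get("score")
    field = response.get("loadingExperience", {}).get("metrics", {})
    return {
        "lab_score": None if score is None else round(score * 100),
        # Field categories are reported as strings such as FAST, AVERAGE, SLOW.
        "field": {name: data.get("category") for name, data in field.items()},
    }
```

To run it against your own pages, fetch each URL from `build_psi_url` with `urllib.request.urlopen`, decode the body with `json.load`, and pass the result to `summarize`. Pause between requests so you stay within the API’s rate limits.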

Chrome DevTools for hands on debugging

Chrome DevTools puts performance testing directly in your browser. Press F12 (or right click and select Inspect) on any webpage.

The Lighthouse tab runs the same tests as PageSpeed Insights but with more control. You can test mobile or desktop, simulate different network speeds, and choose which categories to audit.

The Performance tab shows exactly what happens when your page loads. Record a page load and you get a detailed timeline showing when scripts run, when images load, and where delays occur.

The Coverage tab reveals unused CSS and JavaScript. Many sites load entire libraries when they only use a fraction of the code. This tab shows you exactly how much code goes unused, helping you identify optimization opportunities.

DevTools excels at debugging specific issues. Once you know you have a problem, DevTools helps you find the exact cause.

Search Console for tracking real user experience

Google Search Console shows how your entire site performs for real users over time.

The Core Web Vitals report groups your URLs into three categories: good, needs improvement, and poor. It tells you which pages fail each metric and groups similar pages together.

For example, if all your blog posts have similar CLS issues, Search Console groups them together and suggests the problem might be in your blog template rather than individual posts.

The report updates daily but evaluates a rolling 28-day window, rating each group of URLs by its 75th percentile value rather than a simple average. This smooths out temporary spikes and gives you a realistic picture of typical performance.
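Google rates field data at the 75th percentile of page loads. The sketch below shows that kind of aggregation over your own samples. It is an illustration only: the `(day, value)` input shape and the nearest-rank percentile method are assumptions for this example, not how Search Console computes its report internally.

```python
import math
from datetime import date, timedelta
from typing import List, Tuple


def p75_last_28_days(samples: List[Tuple[date, float]], today: date) -> float:
    """75th percentile (nearest rank) of values recorded in the last 28 days."""
    cutoff = today - timedelta(days=28)
    recent = sorted(value for day, value in samples if cutoff < day <= today)
    if not recent:
        raise ValueError("no samples in the last 28 days")
    rank = math.ceil(0.75 * len(recent))  # 1-based nearest-rank position
    return recent[rank - 1]
```

Because three quarters of the samples must sit at or below the reported value, one slow afternoon barely moves it, while a problem that affects many visits shows up within a few weeks.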

Search Console helps you prioritize which pages to fix first. Focus on poor URLs that get the most traffic. Fixing your homepage matters more than fixing a page nobody visits.
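That prioritization rule is easy to apply to a list of URLs exported from the report and joined with your own traffic numbers. A minimal sketch, assuming each page is a dictionary with hypothetical `url`, `status`, and `monthly_visits` keys:

```python
from typing import Dict, List

# Lower number means fix sooner.
SEVERITY = {"poor": 0, "needs improvement": 1, "good": 2}


def prioritize(pages: List[Dict]) -> List[Dict]:
    """Order failing pages: worst status first, then most visited first."""
    failing = [page for page in pages if page["status"] != "good"]
    return sorted(
        failing,
        key=lambda page: (SEVERITY[page["status"]], -page["monthly_visits"]),
    )
```

Pages that already pass are dropped from the list, so what remains is a work queue you can take from the top.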

Web Vitals Extension for instant feedback

The Web Vitals Chrome extension adds a small icon to your browser toolbar. Click it and you see real time Core Web Vitals metrics for whatever page you’re viewing.

The extension shows you metrics as you browse. LCP updates when the largest element loads. CLS updates as layout shifts occur. INP (which replaced FID as the responsiveness metric) shows up when you interact with the page.

This tool shines during development. Make a change to your site, refresh the page, and immediately see if your metrics improved. No need to run a full audit or wait for reports to generate.

The color coding matches other Google tools. Green badges mean you’re passing. Red badges show problems that need fixing.

One caveat: the extension shows lab style data from your specific browsing session, not aggregated field data from real users.

CrUX Dashboard for historical trends

The Chrome User Experience Report (CrUX) Dashboard shows how your site’s performance changes over time.

Unlike other tools that show a snapshot, the CrUX Dashboard displays monthly trends going back several months. You can see if your recent optimizations actually improved real user experience or if a recent update made things worse.

The dashboard breaks down metrics by device type (phone, tablet, desktop) and connection speed. You might discover that your site performs great on desktop but poorly on mobile, or that slow 3G users have terrible experiences while everyone else does fine.

Setting up the dashboard requires a Google account and takes about five minutes. You connect it to the public CrUX dataset through Google’s Data Studio (now Looker Studio). Once configured, it updates automatically each month.

This tool works best for established sites with decent traffic. Low traffic sites might not have enough data in the CrUX dataset.

WebPageTest for detailed performance analysis

WebPageTest offers the most detailed performance testing available for free. It tests your site from real browsers in different locations around the world.

You can choose from dozens of test locations, multiple browsers, and various connection speeds. Want to know how your site performs for someone in Tokyo using Firefox on a slow 3G connection? WebPageTest can tell you.

The filmstrip view shows screenshots of your page loading frame by frame. You can see exactly when content appears and identify which elements cause delays.

The waterfall chart displays every resource your page loads, when it loads, and how long it takes. Each image, script, stylesheet, and font appears as a bar on the timeline. You can spot render blocking resources, oversized images, and slow third party scripts instantly.
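When a waterfall has hundreds of rows, it can help to export the test as a HAR file (WebPageTest and Chrome DevTools both offer this) and rank the requests in a script. A minimal sketch, assuming the file has already been loaded with `json.load` and follows the HAR 1.2 layout; the function name is invented for this example:

```python
from typing import List, Tuple


def slowest_requests(har: dict, limit: int = 5) -> List[Tuple[str, int, int]]:
    """Return (url, total_ms, body_bytes) for the slowest requests in a HAR log."""
    entries = har["log"]["entries"]
    ranked = sorted(entries, key=lambda entry: entry["time"], reverse=True)
    return [
        (
            entry["request"]["url"],
            round(entry["time"]),  # total elapsed time for the request, in ms
            entry["response"]["content"].get("size", 0),
        )
        for entry in ranked[:limit]
    ]
```

Sorting by `time` surfaces slow third-party scripts. Sorting by the size field instead would surface oversized images.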

WebPageTest also offers advanced features like testing with ad blockers enabled, simulating single point of failure scenarios, and running multi step tests that simulate user journeys.

The interface looks dated but don’t let that fool you. Professional performance engineers rely on WebPageTest for deep analysis.

Lighthouse CI for automated testing

Lighthouse CI integrates performance testing into your development workflow. It automatically tests every code change before it goes live.

Set up Lighthouse CI in your deployment pipeline and it runs Lighthouse tests on every pull request or commit. If a code change hurts performance, you find out before it reaches production.

The tool compares new test results against your baseline scores. If LCP increases by more than your threshold, the build fails and alerts your team. This prevents performance regressions from sneaking into your codebase.
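The comparison itself is simple. The sketch below is not Lighthouse CI’s code or its configuration format. It only illustrates the baseline-versus-threshold idea, with hypothetical metric names and budgets:

```python
from typing import Dict, List


def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    budgets: Dict[str, float],
) -> List[str]:
    """List every metric whose increase over the baseline exceeds its budget."""
    failures = []
    for metric, allowed_increase in budgets.items():
        increase = current[metric] - baseline[metric]
        if increase > allowed_increase:
            failures.append(
                f"{metric} rose by {increase:g} (allowed {allowed_increase:g})"
            )
    return failures
```

A CI step would exit with a non-zero status when the returned list is not empty, which is what fails the build.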

Lighthouse CI requires more technical setup than browser based tools. You need to configure it in your build system (GitHub Actions, GitLab CI, Jenkins, etc.). The documentation provides step by step instructions for popular platforms.

Teams serious about maintaining performance over time find this automation invaluable. Manual testing catches some issues. Automated testing catches regressions consistently, on every change, without relying on someone remembering to run a test.

Choosing the right tool combination

Different tools serve different purposes. Here’s how to combine them effectively.

| Tool | Best For | Frequency |
|---|---|---|
| PageSpeed Insights | Initial assessment and general optimization | Weekly or after major changes |
| Chrome DevTools | Debugging specific performance issues | During active development |
| Search Console | Monitoring site wide trends and identifying problem pages | Weekly review of reports |
| Web Vitals Extension | Real time feedback during development | Continuous during coding |
| CrUX Dashboard | Long term trend analysis and executive reporting | Monthly review |
| WebPageTest | Deep analysis of complex performance problems | As needed for difficult issues |
| Lighthouse CI | Preventing performance regressions | Automated on every code change |

Start with PageSpeed Insights to get your baseline scores and initial recommendations. Fix the obvious issues it identifies.

Set up Search Console to monitor your entire site’s performance with real user data. Check it weekly to catch new problems early.

Install the Web Vitals Extension for immediate feedback as you make changes.

Use Chrome DevTools when you need to debug why a specific metric fails. The detailed timeline and coverage data help you find root causes.

Add WebPageTest when you face complex issues that other tools don’t explain clearly. Its detailed waterfall and filmstrip views often reveal problems other tools miss.

Consider Lighthouse CI if you have a development team making frequent changes. Automated testing prevents your hard won performance gains from eroding over time.

Common mistakes when using performance tools

Testing only your homepage gives you an incomplete picture. Blog posts, product pages, and category pages often have different performance characteristics. Test your most important page types.

Testing only on fast networks and powerful devices creates false confidence. Most of your visitors probably use mid range phones on cellular connections. WebPageTest and Chrome DevTools let you simulate realistic conditions.

Focusing on lab scores while ignoring field data misses real user problems. A page might score perfectly in PageSpeed Insights but still fail for actual visitors dealing with slow servers, poor caching, or third party script delays.

Making too many changes at once prevents you from knowing what actually helped. Change one thing, test it, confirm improvement, then move to the next optimization.

Ignoring the recommendations these tools provide wastes their value. The tools don’t just show scores. They tell you exactly what to fix and estimate the impact. Follow their guidance.

Testing without clearing your cache gives you unrealistic results. Your browser caches resources after the first visit, making subsequent loads much faster. First time visitors don’t have that advantage. Use incognito mode or clear your cache before testing.

“The best performance tool is the one you actually use consistently. Pick a workflow that fits your team’s process and stick with it. Sporadic testing finds sporadic problems. Regular monitoring catches issues before they impact users.” — Web Performance Expert

Interpreting scores and setting realistic targets

Perfect scores look impressive but aren’t always necessary or practical. A score of 90 often delivers the same user experience as a score of 100, especially if you’ve addressed the metrics that matter most to your users.

Different page types have different performance characteristics. Your homepage might achieve a 95 score while your blog posts hover around 85. That’s normal if the blog posts include more images and embedded content that users value.

Focus on passing the Core Web Vitals thresholds rather than chasing perfect scores. Getting LCP under 2.5 seconds matters more than getting it to 1.2 seconds if you’re currently at 4 seconds.
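The thresholds are easy to encode if you want to rate your own measurements. The sketch below uses Google’s published boundaries for good and poor. It includes INP, which replaced FID as a Core Web Vital in March 2024, and keeps FID for older data. The dictionary and function names are just for this example.

```python
from typing import Dict, Tuple

# (upper bound for "good", upper bound for "needs improvement")
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "FID": (100, 300),    # milliseconds, retired metric
    "CLS": (0.1, 0.25),   # unitless score
}


def rate(metric: str, value: float) -> str:
    """Classify a measurement as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"
```

With these bounds, an LCP of 4,000 ms still rates “needs improvement”, and anything above it is “poor”. Getting under 2,500 ms is the change that moves a page to “good”.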

Field data trumps lab data when they conflict. If PageSpeed Insights shows a lab score of 60 but your field data shows 95% of real users have good experiences, your site is probably fine. Lab conditions don’t always reflect real world usage.

Track trends over time rather than obsessing over daily fluctuations. Performance scores vary based on server load, third party services, and testing conditions. A drop from 92 to 88 over one day probably doesn’t mean anything. A steady decline from 92 to 78 over two months signals a real problem.
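One simple way to separate noise from a real decline is to compare averages over two windows instead of two individual days. A minimal sketch, where the seven-measurement window and five-point drop are arbitrary defaults chosen for illustration:

```python
from statistics import mean
from typing import List


def sustained_decline(
    scores: List[float], window: int = 7, min_drop: float = 5.0
) -> bool:
    """True when the latest window averages at least `min_drop` below the earliest."""
    if len(scores) < 2 * window:
        return False  # not enough history to separate trend from noise
    return mean(scores[:window]) - mean(scores[-window:]) >= min_drop
```

A series that bounces between 88 and 93 returns `False`, while one that settles from 92 down to 78 returns `True`.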

Fixing issues the tools identify

Most tools provide recommendations ranked by impact. Start with the high impact items that save the most time.

Image optimization usually offers the biggest gains. Compress images, use modern formats like WebP, implement lazy loading for below the fold images, and specify image dimensions to prevent layout shifts.

JavaScript optimization comes next. Defer non critical scripts, remove unused code, split large bundles into smaller chunks, and load third party scripts asynchronously.

CSS optimization reduces render blocking. Inline critical CSS, defer non critical stylesheets, remove unused styles, and minimize CSS files.

Server response time affects every metric. Use a content delivery network (CDN), implement caching, upgrade your hosting if needed, and optimize database queries.

Font loading causes both layout shifts and delays. Set `font-display: swap` in your `@font-face` rules so text renders in a fallback font while the web font loads, preload critical fonts, and limit the number of font variants you load.

Third party scripts often cause the worst performance problems. Audit what you’re loading, remove unnecessary scripts, use facades for heavy embeds (like replacing a full YouTube embed with a thumbnail until clicked), and load analytics asynchronously.

Test each fix individually to confirm it helps. Sometimes a recommended optimization doesn’t improve your specific situation or even makes things worse. Measure, change, measure again.

Making performance testing part of your routine

Set up a testing schedule that matches your update frequency. If you publish new content daily, check your key pages weekly. If you rarely change your site, monthly checks suffice.

Create a spreadsheet tracking your scores over time. Record the date, tool used, page tested, and each metric score. This simple log helps you spot trends and prove the value of optimization work.
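If you’d rather not update the log by hand, a few lines of script can append each measurement to a CSV file that any spreadsheet program opens. This is a minimal sketch, and the column names are one possible layout rather than a standard:

```python
import csv
from datetime import date
from typing import Optional, TextIO

FIELDS = ["date", "tool", "page", "lcp_ms", "inp_ms", "cls"]


def append_measurement(
    log: TextIO,
    tool: str,
    page: str,
    lcp_ms: float,
    inp_ms: float,
    cls: float,
    when: Optional[date] = None,
    write_header: bool = False,
) -> None:
    """Write one row to an open CSV log; pass write_header=True for a new file."""
    writer = csv.DictWriter(log, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    writer.writerow({
        "date": (when or date.today()).isoformat(),
        "tool": tool,
        "page": page,
        "lcp_ms": lcp_ms,
        "inp_ms": inp_ms,
        "cls": cls,
    })
```

Open the file with `open("vitals_log.csv", "a", newline="")` (the filename is a placeholder) so that rows accumulate across runs.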

Assign someone to monitor Search Console’s Core Web Vitals report. When new issues appear, investigate immediately before they affect many pages.

Test before and after major changes. Adding a new plugin, changing themes, or updating your CDN can impact performance. Baseline testing helps you catch problems early.

Include performance testing in your content creation workflow. Before publishing a new blog post or product page, run it through PageSpeed Insights. Fix obvious issues before the page goes live.

Share performance data with your team. When everyone sees the scores and understands why they matter, optimization becomes a shared priority rather than one person’s concern.

Measuring success beyond the numbers

Better Core Web Vitals scores should translate to better business results. Track metrics that matter to your goals alongside your performance scores.

Monitor bounce rate for pages you optimize. If your LCP improves from 4 seconds to 2 seconds but bounce rate doesn’t change, something else might be driving visitors away.

Watch conversion rates on optimized pages. Faster checkout pages should convert more visitors. Faster landing pages should generate more leads.

Check average session duration and pages per session. Better performance often keeps visitors engaged longer and encourages them to view more pages.

Review your search rankings for important keywords. Google confirmed Core Web Vitals affect rankings, but the impact varies by query and competition.

Survey user satisfaction if possible. Sometimes the metrics improve but users don’t notice, or users feel a dramatic improvement from a modest metric change.

These business metrics validate that your performance work delivers real value beyond better scores.

Keeping up with changes and updates

Google updates Core Web Vitals metrics and thresholds occasionally. Interaction to Next Paint (INP) replaced FID as a Core Web Vital in March 2024, for example. Stay informed about these changes.

Performance tools add new features regularly. PageSpeed Insights, Chrome DevTools, and Search Console all improve their reporting and recommendations over time.

Follow the Chrome Developers blog and web.dev for official updates. These sources announce metric changes, new tools, and best practices directly from the teams building these tools.

Join web performance communities to learn from others facing similar challenges. The Web Performance Slack, Reddit’s r/webdev, and various Discord servers share practical tips and troubleshooting advice.

Attend web performance conferences or watch recorded sessions. Conferences like performance.now() and recordings from past Chrome Dev Summit events showcase cutting edge techniques and case studies.

Your path to better performance starts now

You don’t need to master every tool immediately. Start with PageSpeed Insights to see where you stand. Install the Web Vitals Extension for ongoing feedback. Set up Search Console to monitor real user experiences.

Pick one problem the tools identify and fix it this week. Maybe it’s compressing your images or deferring a heavy script. Make the change, test again, and see the improvement.

Performance optimization isn’t a one time project. It’s an ongoing practice. The tools covered here make that practice manageable and even rewarding. Each improvement makes your site faster for real people trying to read your content, buy your products, or use your services.

The best time to start measuring was when you launched your site. The second best time is right now.